Get your website recovered, functional, and ready to install

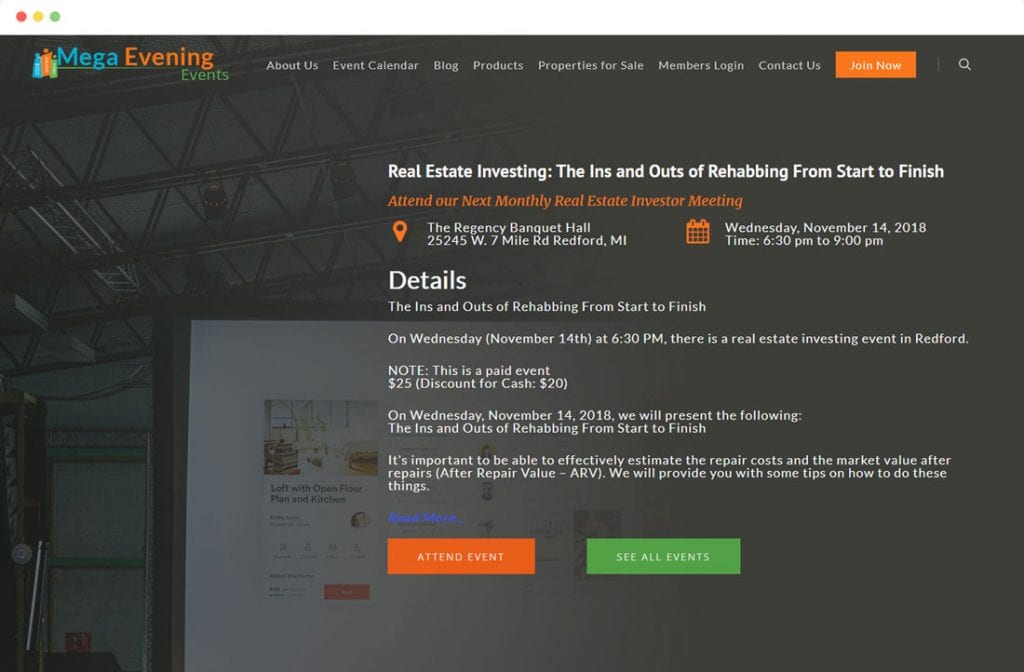

Restore your website from the Wayback Machine with Wayback Revive

Offering amazing web solutions for you!

Wayback Revive helps you restore your old website from the Wayback Machine quickly and with minimal effort.

Simple Process from Start to Finish

Just go to the Wayback Machine, choose the version of your website you want recreated (the best-working version with a correct layout and displaying as many files as possible), send us the link, and wait. We will do all the work. You will receive an email containing the download link, and all you will need to do is download the files, unpack them, and upload them to the server.

We get the Job Done

Here's what we offer!

Removal of the Unnecessary

Page linking & Wayback Header

Our custom software goes through every page, removes all of the Wayback Machine code, and re-codes the pages so they work when uploaded to your new server. If you are worried that the pages will not link to each other properly, rest assured: they will.

Everything You’ll Need

File Assets & Low Wait times

When we recover your website from the Web Archive, we download everything that is there. We call it WYSIWYG (What You See Is What You Get): if you see it, we'll download it*. About 75% of orders are delivered within an hour; delivery can take up to 24 hours. If yours is among the 25% of orders that exceed the normal timeframe (which varies with the number of links, the content, and server speed), we'll notify you every step of the way.

Our Core Services

Need a custom service involving the Wayback Machine or website restoration?

Feel free to Contact Us.

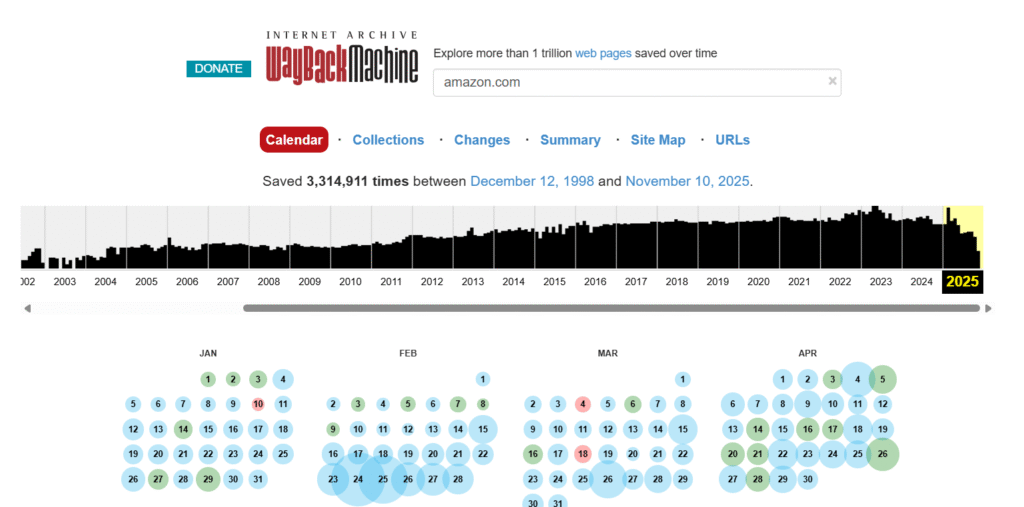

Wayback Machine Downloader: What's Broken, What Works, and What to Actually Do

The original hartator Ruby gem — cited in thousands of tutorials, YouTube videos, and Stack Overflow threads — stopped working reliably in 2024. This is the one guide that clearly explains what broke, what to use instead, and when skipping DIY entirely makes more sense.

Why Every Tutorial Recommends a Broken Tool

The hartator/wayback-machine-downloader Ruby gem has 5,800+ GitHub stars and was the only real automated solution for years. It has been largely unmaintained since 2022 and fails reliably in 2024–2026. Nobody explains this clearly. We will.

What made it the standard

It solved a real problem: scrape the Wayback Machine CDX API to get all archived URLs for a domain, then download each one. From 2014–2022, it worked well enough. Every YouTube video, Stack Overflow thread, and blog post pointed to it — so it accumulated stars and became the default recommendation.
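The two-step approach described above (list archived URLs through the CDX API, then fetch each one) can be sketched in a few lines. This is a minimal illustration, not the gem's actual code: the function names are hypothetical, and the query parameters and the `id_` raw-capture modifier are taken from the public CDX and Wayback URL conventions rather than from this guide.

```python
from urllib.parse import urlencode

CDX_ENDPOINT = "https://web.archive.org/cdx/search/cdx"


def build_cdx_query(domain: str, date_from: str = "", date_to: str = "") -> str:
    """Build a CDX query URL listing one successful capture per archived URL."""
    params = [
        ("url", f"{domain}/*"),        # every path under the domain
        ("output", "json"),
        ("fl", "timestamp,original"),  # only the two fields we need
        ("filter", "statuscode:200"),  # skip redirects and errors
        ("collapse", "urlkey"),        # one row per unique URL
    ]
    if date_from:
        params.append(("from", date_from))
    if date_to:
        params.append(("to", date_to))
    return f"{CDX_ENDPOINT}?{urlencode(params)}"


def snapshot_urls(cdx_rows: list[list[str]]) -> list[str]:
    """Turn CDX JSON rows into raw-capture URLs.

    The first row of the JSON output is a header, so it is skipped.
    The id_ modifier requests the page as captured, without the
    toolbar or rewritten links.
    """
    return [
        f"https://web.archive.org/web/{timestamp}id_/{original}"
        for timestamp, original in cdx_rows[1:]
    ]
```

No network call is made here on purpose: fetching the query URL and each snapshot URL is where rate limiting applies, so that part deserves its own retry logic.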

The result: thousands of developers follow instructions that are years out of date, waste hours debugging unfixable errors, and give up — assuming they did something wrong. Most tutorials never mention the tool is broken.

Why it stopped working

- 01 Internet Archive rate limiting — IA tightened CDX API rate limits; bulk requests now return 429/400 errors the gem never handles gracefully

- 02 Ruby 3.x SSL behavior changes — Net::HTTP in Ruby 3+ enforces stricter SSL cert verification, causing OpenSSL::SSL::SSLError across many environments

- 03 Maintenance abandoned — open issues go unresolved for 2+ years, PRs unmerged, maintainer inactive. Last meaningful commit: 2021

- 04 No archive footprint cleanup — even when it downloads, every HTML file contains Wayback Machine toolbar scripts and rewritten links. Those need manual removal before deploying anywhere

Connection Refused or Timeout

IA's servers drop or throttle connections from the concurrent request volume the gem makes. Using --concurrency 1 helps somewhat but doesn't resolve the underlying 400 errors. No fix exists in the original gem.
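For anyone scripting their own downloads, the usual answer to throttling is to retry with exponential backoff instead of failing on the first 429. The sketch below is a hypothetical helper, not something the gem or any fork provides; `fetch` stands in for whatever function performs the HTTP request and returns a `(status, body)` pair. A 400 is treated as non-retryable here, which is an assumption: repeating a rejected query unchanged is unlikely to help.

```python
import time
from typing import Callable, Tuple

# Status codes worth retrying after a pause (assumption for this sketch).
RETRYABLE = {429, 500, 502, 503, 504}


def fetch_with_backoff(
    fetch: Callable[[], Tuple[int, bytes]],
    max_attempts: int = 5,
    base_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bytes:
    """Call fetch() until it succeeds, hits a non-retryable status, or attempts run out."""
    for attempt in range(max_attempts):
        status, body = fetch()
        if status == 200:
            return body
        if status not in RETRYABLE:
            raise RuntimeError(f"giving up: HTTP {status}")
        if attempt < max_attempts - 1:
            # Double the pause after each throttled attempt: 2s, 4s, 8s, ...
            sleep(base_delay * (2 ** attempt))
    raise RuntimeError(f"still throttled after {max_attempts} attempts")
```

Passing `sleep` as a parameter keeps the helper testable without real waiting.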

CDX API Query Format Rejected

The Wayback Machine updated its CDX API parameters. The gem sends queries in an outdated format and gets rejected, often silently downloading 0 files. Only a community fork with patched API calls fixes this.

SSL / TLS Handshake Failure

Ruby 3.x enforces stricter SSL certificate verification. The gem was written for older Ruby behavior. Common on macOS Sonoma and any system with Ruby 3.2+. Downgrading to Ruby 3.1 via rbenv is the usual workaround.

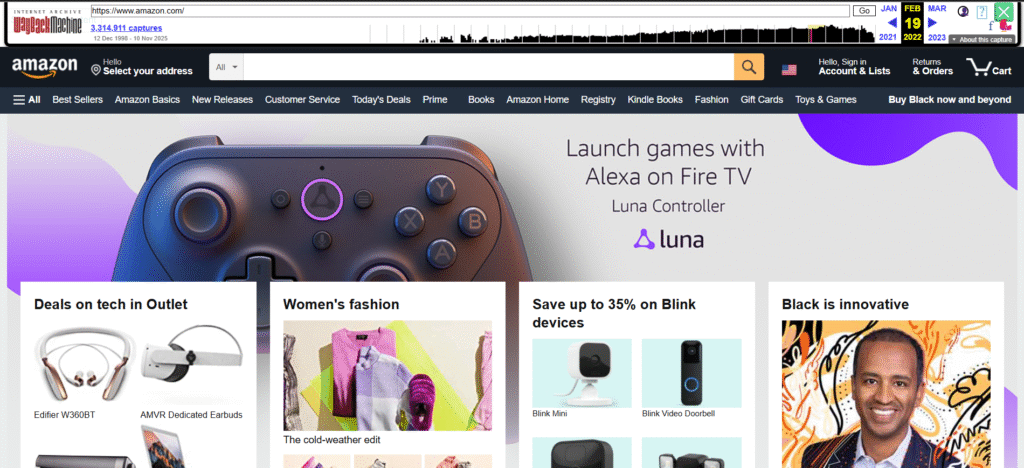

All Wayback Machine Download Methods in 2026

Every meaningful approach tested and scored. No affiliate links, no promotional ratings.

| What matters | hartator gem ⚠ Issues | Community fork ⚡ Better | wget / curl 🛠 Manual | HTTrack 🖱 GUI | Archivarix 🌐 Web | Wayback Revive ✓ Full |
|---|---|---|---|---|---|---|
| Works reliably in 2026 | ⚠ Often fails | With effort | Yes | Yes | Yes | ✓ Guaranteed |
| Full site (all pages) | ⚠ Incomplete | Usually | With flags | Usually | 200-file free limit | ✓ Complete |
| Images & media recovered | Partial | Partial | Partial | Partial | Partial | ✓ Maximum recovery |
| Clean HTML (no archive code) | ✗ | ✗ | ✗ | ✗ | Partial | ✓ Fully cleaned |
| WordPress CMS delivery | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ +$80 upgrade |
| Sites with 100+ pages | ⚠ High failure rate | Possible, slow | Possible, tedious | Possible, slow | Paid tier required | ✓ No size limit |
| Technical skill required | Ruby + CLI | Ruby, rbenv/rvm | CLI + scripting | Low (GUI available) | None | None (we handle it) |
| Cost | Free | Free | Free | Free | Free / paid | $30 HTML · $110 WP |

DIY Guide: Real Commands for Each Method

Each section below gives you working commands, honest limitations, and what you will need to clean up after downloading.

Community Fork — Best CLI Option

The original gem is unmaintained but community forks exist with patches for the CDX API format issues. ShiftaDeband's fork is the most actively maintained as of 2026. Install from GitHub — not the gem registry.

    # Step 1: Use Ruby 3.1, not 3.2/3.3 (SSL issues on newer versions)
    # Install rbenv first if needed: https://github.com/rbenv/rbenv
    rbenv install 3.1.0 && rbenv global 3.1.0
    gem install bundler

    # Step 2: Clone the maintained fork, NOT the original gem
    git clone https://github.com/ShiftaDeband/wayback-machine-downloader.git
    cd wayback-machine-downloader
    bundle install

    # Step 3: Basic download
    bundle exec ruby bin/wayback_machine_downloader http://example.com

Useful flags (these reduce errors significantly)

    # Reduce 429 rate-limiting errors with concurrency 1
    bundle exec ruby bin/wayback_machine_downloader http://example.com --concurrency 1

    # Target a specific snapshot date range
    bundle exec ruby bin/wayback_machine_downloader http://example.com \
      --from 20220101 \
      --to 20231231 \
      --concurrency 1

    # Download only HTML (faster; skip images on the first pass)
    # --only takes a substring, or a regex in /.../ notation; a glob like *.html will not match
    bundle exec ruby bin/wayback_machine_downloader http://example.com --only "/\.html?$/i"

    # Specify output directory
    bundle exec ruby bin/wayback_machine_downloader http://example.com --directory ./output/

Output still needs cleanup. Even when the fork downloads successfully, every HTML file contains the Wayback Machine toolbar script, rewritten internal links pointing to archive.org, and injected meta tags. You will need cleanup scripts before deploying. For 5–10 pages this is manageable; for a full site, plan several hours of scripting work.

Still seeing SSL errors? Confirm you're on Ruby 3.1 (ruby -v). Ruby 3.2+ changes Net::HTTP SSL behavior. If you're getting 0 URLs found, try narrowing the date range with --from and --to — very large ranges sometimes return empty from the CDX API.
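If a wide date range comes back empty, one way to narrow it systematically is to run the downloader once per calendar year. The sketch below only generates the command lines; it uses the `--from`, `--to`, and `--concurrency` flags shown earlier, and the helper names are hypothetical.

```python
import shlex

BASE_CMD = ["bundle", "exec", "ruby", "bin/wayback_machine_downloader"]


def yearly_windows(first_year: int, last_year: int) -> list[tuple[str, str]]:
    """Return (from, to) timestamp pairs covering each year in the range."""
    return [(f"{year}0101", f"{year}1231") for year in range(first_year, last_year + 1)]


def download_commands(site_url: str, first_year: int, last_year: int) -> list[str]:
    """Build one downloader command per year, safely quoted for a shell."""
    return [
        shlex.join(BASE_CMD + [site_url, "--from", start, "--to", end, "--concurrency", "1"])
        for start, end in yearly_windows(first_year, last_year)
    ]


if __name__ == "__main__":
    for command in download_commands("http://example.com", 2022, 2023):
        print(command)
```

Running the years one at a time also makes it obvious which window, if any, actually holds snapshots.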

wget — No Ruby Required

wget works on every Unix-like system with no dependency setup. You mirror pages directly from web.archive.org. Output is raw archive files — functional but needs significant cleanup work before deploying.

    # First: find your snapshot date at web.archive.org and copy the timestamp
    # Then mirror with these flags:
    wget \
      --recursive \
      --level=5 \
      --page-requisites \
      --convert-links \
      --no-parent \
      --wait=1 \
      --random-wait \
      --restrict-file-names=windows \
      --domains web.archive.org \
      "https://web.archive.org/web/20231201000000/https://example.com/"

    # --wait=1 --random-wait → rate limiting protection
    # --level=5 → follow links 5 levels deep
    # --page-requisites → get all images, CSS, JS for each page
    # Use a single snapshot URL: a * after the timestamp opens the capture listing, not a page
    # Replace the date/domain with your actual snapshot

Cleanup required after downloading

Remove the Wayback Machine toolbar script

Every HTML file has a multi-line <!-- BEGIN WAYBACK TOOLBAR INSERT --> block. Must be removed from every page. A Python or sed script can batch this, but the block spans multiple lines making simple regex fragile — expect to write and test carefully.
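A minimal Python sketch of the toolbar removal, assuming the block is closed by a matching `<!-- END WAYBACK TOOLBAR INSERT -->` comment (check one of your own files to confirm). The `re.DOTALL` flag is what lets the pattern span multiple lines, and the non-greedy `.*?` keeps it from swallowing anything past the first END marker.

```python
import re

# Matches the whole toolbar block, including the newline(s) after it.
TOOLBAR_RE = re.compile(
    r"<!--\s*BEGIN WAYBACK TOOLBAR INSERT\s*-->"
    r".*?"
    r"<!--\s*END WAYBACK TOOLBAR INSERT\s*-->\s*",
    re.DOTALL,
)


def strip_toolbar(html: str) -> str:
    """Return html with every Wayback toolbar block removed."""
    return TOOLBAR_RE.sub("", html)
```

Pages without a toolbar pass through unchanged, so the function is safe to run over every file in the download.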

Fix all internal links

Every link is rewritten to a full archive.org path (e.g. https://web.archive.org/web/20231201/https://example.com/about). These must be converted back to relative or domain paths. Even the --convert-links flag produces archive.org-relative paths, not your-domain paths.
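The link conversion can be sketched as a single regular expression that recognises an archive prefix followed by your own domain and keeps only the path. This is an illustration under assumptions: `make_link_fixer` is a hypothetical helper, timestamps are taken to be 4 to 14 digits, and resource modifiers such as `im_` or `js_` are assumed to be two lowercase letters plus an underscore. Links to other domains are left untouched.

```python
import re
from typing import Callable


def make_link_fixer(domain: str) -> Callable[[str], str]:
    """Build a function that rewrites archived links for `domain` to root-relative paths."""
    pattern = re.compile(
        # Optional archive host, then /web/<timestamp><optional modifier>/
        r"(?:(?:https?:)?//web\.archive\.org)?/web/\d{4,14}(?:[a-z]{2}_)?/"
        # The original URL: scheme, optional www, the domain, optional port
        r"https?://(?:www\.)?" + re.escape(domain) + r"(?::\d+)?(?![\w.-])"
        # Whatever path follows, up to a quote, space, > or )
        r"""(?P<path>/[^"'\s>)]*)?"""
    )

    def fix(html: str) -> str:
        # A link to the bare domain becomes "/".
        return pattern.sub(lambda m: m.group("path") or "/", html)

    return fix
```

For example, `make_link_fixer("example.com")` turns `https://web.archive.org/web/20231201/https://example.com/about` into `/about`. Root-relative paths work on any domain you deploy to; swap the replacement for a full URL if you need absolute links.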

Remove injected meta tags and attributes

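Saved pages can also carry archive-specific meta tags, comments, and Wayback script attributes on HTML elements. (The X-Archive-Orig-* values are HTTP response headers, so they end up in your files only if your tool saved the headers.) Left in place, these can signal to search engines that the page is an archive copy, which is harmful to SEO.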
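Injected script and stylesheet tags can be removed with a similar pass. The markers used below are assumptions about current Wayback markup, not something this guide confirms: external assets are assumed to load from `web-static.archive.org` or a `/_static/` path, and inline bootstrap scripts are assumed to call `__wm.`. Inspect a downloaded page and adjust the patterns before relying on this.

```python
import re

# <script> or <link> tags whose attributes point at Wayback's static assets (assumed markers).
INJECTED_ASSET_RE = re.compile(
    r"<(?:script|link)\b[^>]*(?:web-static\.archive\.org|/_static/)[^>]*>"
    r"(?:\s*</script>)?\s*",
    re.IGNORECASE,
)

# Inline scripts that reference the __wm object (assumed marker), never crossing </script>.
INLINE_WM_RE = re.compile(
    r"<script\b[^>]*>(?:(?!</script>).)*__wm\.(?:(?!</script>).)*</script>\s*",
    re.IGNORECASE | re.DOTALL,
)


def strip_injected_tags(html: str) -> str:
    """Remove Wayback-injected asset tags and inline bootstrap scripts."""
    html = INJECTED_ASSET_RE.sub("", html)
    return INLINE_WM_RE.sub("", html)
```

The site's own scripts are kept because they match neither marker.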

Reorganize the file structure

Files download into a deeply nested web.archive.org/web/TIMESTAMP/example.com/ directory. You need to flatten and rename to match your original URL structure before uploading to any web server.
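The flattening step amounts to dropping everything up to and including the domain directory. A small sketch of that mapping, with a hypothetical helper name; it only computes the target path, so you can review the planned moves before touching any files.

```python
from pathlib import PurePosixPath
from typing import Optional


def original_path(downloaded: str, domain: str) -> Optional[str]:
    """Map a nested download path to the path it should have on your server.

    Returns None if the domain directory is missing or nothing follows it.
    """
    parts = PurePosixPath(downloaded).parts
    for index, part in enumerate(parts):
        if part in (domain, f"www.{domain}"):
            rest = parts[index + 1:]
            return str(PurePosixPath(*rest)) if rest else None
    return None
```

For example, `original_path("web.archive.org/web/20231201000000/example.com/about/index.html", "example.com")` gives `about/index.html`. Because the function looks for the domain directory anywhere in the path, it tolerates extra directory levels that a tool may insert before it.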

Time estimate: The wget download itself is fast. Cleanup for a 20–30 page site is 3–6 hours for a developer comfortable with scripting. For 100+ pages, plan a full day or more. Non-developers will find cleanup effectively impossible to complete correctly.

HTTrack — Best Option for Non-Developers

HTTrack is a mature website copier with both a GUI (Windows) and CLI (Mac/Linux). The most accessible free option for users not comfortable with terminals. Output quality is similar to wget — archive footprints still need cleanup before deploying.

    # Install
    # macOS: brew install httrack
    # Linux: sudo apt install httrack
    # Windows: GUI installer from httrack.com (WinHTTrack)

    # CLI: mirror a specific Wayback Machine snapshot
    httrack "https://web.archive.org/web/20231215000000/https://example.com/" \
      -O "/output/folder" \
      "+*web.archive.org/web*example.com*" \
      --near --mirror

    # The scan rule (+*web.archive.org/web*example.com*) stops it following unrelated archive.org links
    # Replace the timestamp and domain with your actual target

Using WinHTTrack GUI (Windows)

Download and install WinHTTrack from httrack.com

Free installer for Windows. Launch it, create a new project, set your output folder.

Enter your Wayback Machine snapshot URL

Use the full timestamped archive URL: https://web.archive.org/web/20231215000000/https://yoursite.com/. Find your snapshot date at web.archive.org first, copy the URL with timestamp.

Add a scan rule to limit scope

Add +*yoursite.com* as a scan rule. This prevents HTTrack following links out to unrelated archive.org pages and downloading far more than you need.

Run and clean the output

Same cleanup as wget: toolbar scripts, rewritten links, and archive meta tags must be removed before deployment. HTTrack's advantage is the GUI — output quality is similar to wget.

Windows users: WinHTTrack is the most beginner-friendly option in this guide — no terminal required. The limiting factor is still output cleanup after downloading, which requires scripting regardless of which tool you used. If you're not comfortable with that step, our professional service handles everything from download to delivery.

When DIY Makes Sense — and When It Doesn't

The free tools work. But there's a gap between "downloaded some files" and "have a clean deployable website." Here's where that gap becomes a real day-waster.

Sites with 50+ pages

Archive footprint cleanup is a per-file task. A sed script handles a small site in an hour. At 100+ pages, you're writing, testing, and debugging custom scripts for a full day — then still verifying each page manually before the site is usable.

You need clean, deployable output

Every downloaded page has archive toolbar code, links pointing to archive.org, and injected meta tags. Miss any of it and Google sees an archive mirror rather than a real website. Thorough cleanup requires scripting skill and careful verification across every file.
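Verification can be partly automated by scanning the cleaned files for anything that still mentions the archive. A minimal sketch, assuming the pages have already been read into a dictionary of file name to HTML; the function name and the two markers it checks are illustrative, and a clean report does not replace opening the pages in a browser.

```python
import re

# Strings that should not survive cleanup.
LEFTOVER_RE = re.compile(r"web\.archive\.org|WAYBACK TOOLBAR", re.IGNORECASE)


def find_leftovers(pages: dict[str, str]) -> dict[str, list[str]]:
    """Return, per file, the sorted distinct archive markers still present."""
    report = {}
    for name, html in pages.items():
        hits = sorted({match.group(0).lower() for match in LEFTOVER_RE.finditer(html)})
        if hits:
            report[name] = hits
    return report
```

An empty result means none of the checked markers remain; any file listed needs another cleanup pass.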

You need WordPress — not static HTML

All free tools give you raw HTML files. Moving content into a working WordPress CMS with pages in Classic Editor, images in the media library, and navigation working is a completely separate process no free tool handles automatically.

DIY makes sense if:

- ✓ Your site has fewer than 10–15 pages

- ✓ You're comfortable writing cleanup scripts

- ✓ You just need the content, not a live deployable site

- ✓ You want to verify your site is archived before spending money

Professional restoration makes sense if:

- ✓ Your site has more than 15 pages

- ✓ You need a working, uploadable site, not raw files

- ✓ You need original URL slugs preserved for SEO recovery

- ✓ You've already spent over an hour on this

- ✓ You need WordPress delivery

Not sure if your site was ever archived?

Free checker: enter your domain, see snapshot count and restore quality in 30 seconds.

What Professional Restoration Includes

No downloads, no cleanup scripts, no Ruby version debugging. You send us your archive URL. We deliver a clean, deployable website.

What's included in the service

- ✓ All pages downloaded from the best available snapshot

- ✓ Every archive.org toolbar script and injected banner removed

- ✓ All internal links fixed to point to your domain, not archive.org paths

- ✓ Images recovered and correctly linked inside each page

- ✓ Original URL slugs preserved, so existing backlinks still work

- ✓ Original meta titles and descriptions kept intact

- ✓ Works from any original platform: WP, HTML, Joomla, anything

- ✓ Detailed recovery report with every page accounted for

- ✓ Optional: delivered as a working WordPress CMS (+$80)

Wayback Revive — Professional Restoration

Flat rate · No hidden fees · Any site, any size

1–2 day delivery

3–5 day delivery

Done debugging.

Get your site back today.

$30 gets you clean, deployable HTML files. $110 gets you a working WordPress site. 100% money-back if we can't find your site in the archive.

What's Included with Every Recovery

Wayback Header Removed

We bet you've tried downloading your site yourself and got that pesky header on every page. With our service, we'll make sure it's all removed.

Up to 10 Levels of Deep Pages

When we download your site, we follow links up to ten levels deep so the restored website matches the original as closely as possible. We make sure it's all there for you.

Original URLs

When you get your downloaded site, all of the original URLs are written into the files, which improves your chances of keeping your search rankings.

Website Assets

Not only are the pages downloaded; the CSS, images, Flash, JavaScript, and videos are downloaded as well.

Pages Correctly Link

Don't worry about pages linking to each other: our software rewrites the link structure on the fly so that each link points to the correct page.

Free FTP Upload

No idea how to upload the site after it's downloaded? No worries, just email us and we'll upload it for you at no extra cost.

Detailed Download Report

With each website download, you'll receive a detailed report of everything that was and was not downloaded.

Fast Turnaround Time

It usually takes less than an hour for small sites and a few hours for larger sites. WordPress conversions take 24–36 hours.

30 Day File Retention

We hold your recovery for 30 days in case you aren't able to download and restore the site right away.